How Astronomy and AI Are Solving Each Other’s Problems

Astronomy is sitting on a cosmic treasure chest. That treasure is data—petabytes of it. To put that in perspective, a petabyte can hold about 200,000 high-definition movies, 210 million songs, or 256 million high-resolution photos.

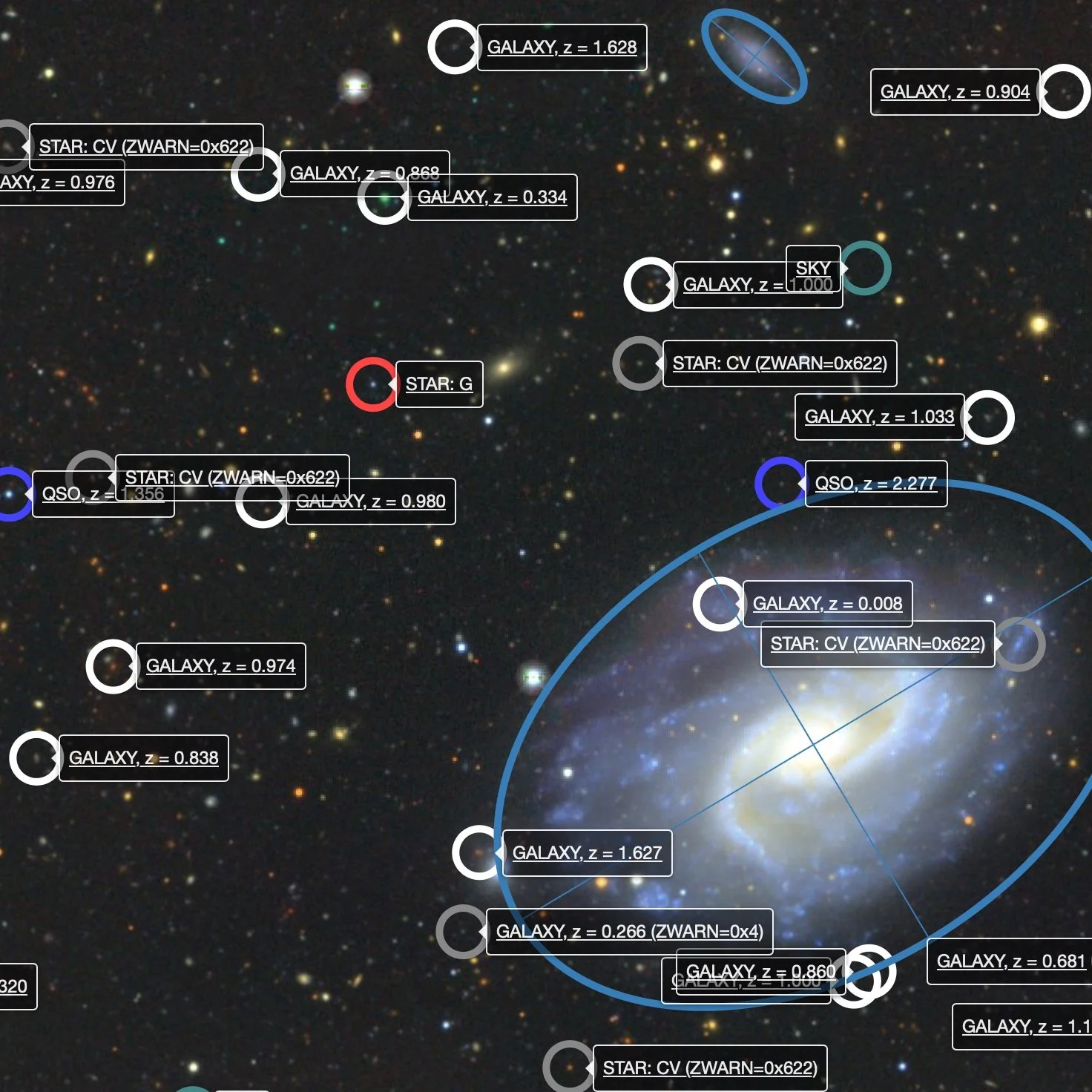

“Astronomers are really good at creating a lot of data really, really fast,” says Eric Murphy, an astronomer at the National Radio Astronomy Observatory (NRAO). And he says the data have some irresistible qualities: They’re free to use, safe, visual, messy, and immense. Essentially, this treasure house is perfect for computer scientists who need massive datasets to train their artificial intelligence (AI) models but often are stuck with overly simplistic data locked behind paywalls. Astronomy also provides a low-risk environment to test AI models: If an algorithm misclassifies a galaxy, nobody gets hurt.

It’s a match made in the cosmos: Astronomy gets shiny new tools to process its mountains of data, and AI gets a safe playground filled with exciting toys to improve its methods.

Enter the NSF-Simons AI Institute for Cosmic Origins (CosmicAI), which was established in 2024 to cultivate this symbiotic relationship.

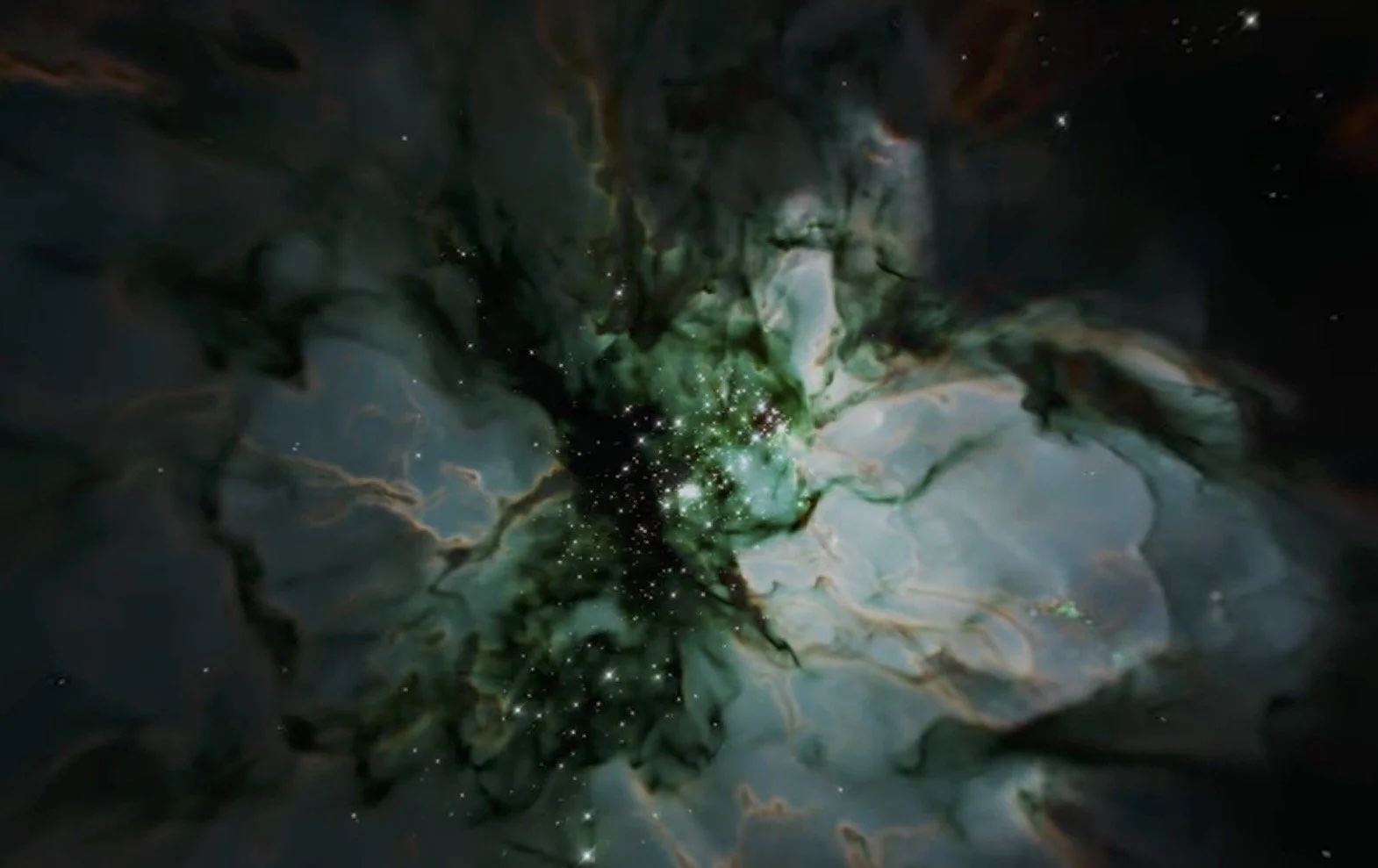

“The CosmicAI Institute was envisioned as a way to inspire people, gain a better understanding of the universe, develop innovative open-source AI tools for astronomy, and create educational opportunities,” says CosmicAI Director Stella Offner, a professor of astronomy and faculty member in the Oden Institute for Computational Engineering and Sciences, both at The University of Texas at Austin. Offner says that at the heart of astronomy lies one of humanity’s most fundamental questions: Where do we come from?

Spurred by a 2023 executive order about the development of secure and trustworthy AI, the National Science Foundation (NSF) and the Simons Foundation (a private philanthropy that funds research in mathematics and the basic sciences) jointly announced a funding call for two national AI institutes in the astronomical sciences. With 25 other AI institutes under its belt, NSF was ready to expand into astronomy’s gold mine. Offner seized the opportunity, and CosmicAI was born.

CosmicAI consists of eight partners across the country: UT Austin, the Texas Advanced Computing Center, the University of Virginia, the University of Utah, New York University, and the University of California, Los Angeles, along with two nationally funded astronomy centers, NRAO and the National Optical-Infrared Astronomy Research Laboratory (NOIRLab). Private collaborators, including researchers at the nonprofit Allen Institute for Artificial Intelligence, also support CosmicAI. Inside the institute, you’ll find astronomers, computer scientists, mathematicians, chemists, linguists, and physicists, each speaking their own language like a modern Tower of Babel.

Their mission revolves around four grand AI challenges: trustworthiness, efficiency, interpretability, and robustness. A working group tackles each of these challenges: the Observable Universe (efficiency), Explorable Universe (trustworthiness), Accelerated Universe (robustness), and Explainable Universe (interpretability). An AI expert and an astronomer lead each group. A year and a half in, the institute is creating better AI tools for astronomy while attempting to address such fundamental mysteries as the nature of dark matter and the origin of life. But there’s a catch: The treasure is arriving faster than anyone can process it.

Efficiency: The Observable Universe

Eric Murphy, an astronomer at NRAO and co-principal investigator for CosmicAI, leads the Observable Universe effort. And he says he’s drowning in data.

The Atacama Large Millimeter/submillimeter Array (ALMA), an array of 66 radio telescopes, sits atop one of the highest plateaus in a remote desert in northern Chile. It observes millimeter- and submillimeter-wavelength light, a low-frequency form of electromagnetic radiation, collecting data 24 hours a day, 365 days a year.

Murphy says that in the near future, ALMA will be upgraded to increase its sensitivity, allowing it to see even deeper into the universe.